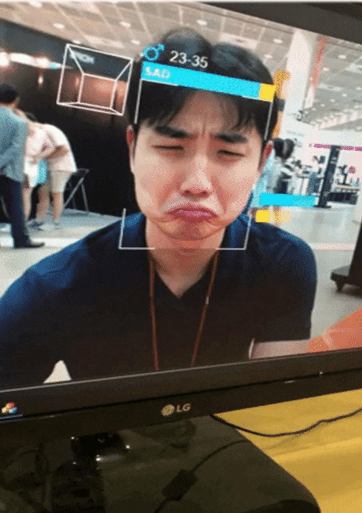

Can AI really understand our emotions?


How can we know if an elderly person is suffering in silence? How can we detect stress or anxiety in a patient with Alzheimer’s disease when they can no longer clearly express how they feel? For many people, especially seniors or those living with neurodegenerative disorders, emotions are an essential indicator of well-being, yet often difficult to perceive.

This is precisely the issue addressed by researchers at the CESI LINEACT research unit. Through their work in artificial intelligence and computer vision, they explore how AI can become a more refined, nuanced, and human-centered support tool. A recent study led by Amine Bohi and Varsha Devi and presented at ICIAP 2025, the International Conference on Image Analysis and Processing, proposes an innovative approach to overcoming current limitations in automatic emotion recognition.

Why the Face Is Not Enough

“We often think that recognizing an emotion simply means recognizing a facial expression. In reality, it’s much more complex than that,” explains Varsha Devi, a computer vision researcher at CESI LINEACT.

Facial analysis alone is insufficient for three main reasons:

- The face is a clue, not proof.

An expression can be misleading. We smile out of politeness; we hide our worries. The face alone does not tell the whole story and can lead to misinterpretations.

- The case of “neutral faces.”

The issue is particularly significant for people with cognitive disorders. Their expressions are often subtle, neutral, or dominated by negative emotions (sadness, anxiety) that may mask their true inner state.

- The risk of misinterpretation.

When relying solely on these ambiguous signals, traditional AI models often produce oversimplified or inaccurate classifications. This observation, well documented in neuropsychiatry and neurology, makes it necessary to look beyond the face for additional cues.

“The face provides clues, but it is never absolute proof. If we stop there, we risk being wrong,” summarizes Amine Bohi, Associate Professor and Researcher at CESI LINEACT.

Context: A Deceptively Good Idea?

To improve emotion recognition, one idea naturally emerged: adding context. After all, emotions never exist in isolation. They depend on a place, a situation, an environment. Yet here too, researchers identified a trap.

Consider a simple example. A person with a neutral expression photographed in a hospital will very often be interpreted as sad by an AI model. The same person, with exactly the same expression, in a park or at a festive event, may be judged serene or happy. In this case, the AI is no longer reading the person’s emotion; it is reading the setting.

“The risk is that the algorithm mainly learns shortcuts: hospital = sadness, party = joy. It then projects stereotypes onto individuals,” the research conducted by Amine Bohi and Varsha Devi warns.

This phenomenon is known as context bias. It occurs when AI gives more importance to the environment than to the individual.

The consequence is significant: instead of learning to interpret the person’s actual emotion, the model builds “statistical shortcuts” that associate a place (hospital) with a stereotyped emotion (sadness). Rather than helping, the technology ends up projecting prejudices onto individuals.

From this observation arises the central research question:

How can context be integrated without letting it overpower the individual?

A Key Idea: Separate to Better Understand

The solution proposed by CESI LINEACT researchers is both simple and ingenious: separate in order to better understand. Their model analyzes both the face and the context, but not together at first.

On one side, the face is studied as the primary source of information about the individual. On the other, the context is analyzed separately, with the face deliberately masked. This forces the AI to examine only the environment, without relying on facial expression.
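To make the masking step concrete, here is a minimal Python sketch, assuming the frame is a NumPy array and that a face detector has already returned a bounding box as `(x0, y0, x1, y1)` pixel coordinates. The helper name `mask_face`, the box format, and the constant fill value are illustrative assumptions, not details taken from the CESI LINEACT study.

```python
import numpy as np


def mask_face(image: np.ndarray, box: tuple, fill: float = 0.0) -> np.ndarray:
    """Return a copy of `image` with the face region blanked out.

    Hypothetical helper: `box` is assumed to be (x0, y0, x1, y1) in pixels,
    as produced by some upstream face detector (not specified in the study).
    """
    x0, y0, x1, y1 = box
    masked = image.copy()        # keep the original frame for the face branch
    masked[y0:y1, x0:x1] = fill  # rows index y, columns index x
    return masked


# Usage: a 4x6 RGB frame of ones with a 2x2 face box.
frame = np.ones((4, 6, 3))
context_view = mask_face(frame, (1, 1, 3, 3))
print(context_view[1:3, 1:3].sum())  # face pixels are now zero
print(frame.sum())                   # original frame is unchanged
```

Blanking the box with a constant is the crudest option; blurring or inpainting the region would also hide the expression while disturbing the rest of the scene less.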

This is where the key mechanism comes into play: context “denoising.” In this project, the term does not mean removing noise from an image or signal, but reducing contextual bias. The mechanism is specifically designed to keep the model from relying on statistical shortcuts that blindly associate a place with an emotion.

To achieve this, contextual information undergoes a complex process in which it is first transformed, sometimes even “noised,” before its influence is corrected and partially subtracted. The model uses facial signals as a guide: if the face expresses an emotion that strongly contradicts the stereotype associated with the scene, the algorithm learns to trust the individual more than the environment.

In other words, the AI is trained to ask itself a simple question:

Is the setting truly explaining the emotion, or is it misleading me?
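One way to picture this face-guided correction is as a prior subtraction in logit space, in the spirit of the general "logit adjustment" technique: the context branch's scores are pushed away from the emotion distribution a scene stereotypically implies, and pushed harder when the face disagrees with that stereotype. This is a minimal Python sketch under stated assumptions, not the authors' published method. The names `debias_context` and `scene_prior`, the total-variation agreement score, and `max_strength` are all hypothetical, and the transformation and "noising" steps described above are not modeled.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over a 1-D score vector."""
    e = np.exp(z - z.max())
    return e / e.sum()


def debias_context(context_logits, scene_prior, face_probs, max_strength=1.0):
    """Partially subtract a scene stereotype from the context branch.

    Hypothetical sketch: all three inputs are 1-D arrays over the same
    emotion classes; `scene_prior` and `face_probs` each sum to 1, and
    `scene_prior` must be strictly positive (its log is taken).
    Returns the corrected context distribution and the strength used.
    """
    context_logits = np.asarray(context_logits, dtype=float)
    scene_prior = np.asarray(scene_prior, dtype=float)
    face_probs = np.asarray(face_probs, dtype=float)

    # Agreement in [0, 1]: one minus the total variation distance
    # between what the face shows and what the scene stereotype implies.
    agreement = 1.0 - 0.5 * np.abs(face_probs - scene_prior).sum()

    # The more the face contradicts the stereotype, the more of the
    # stereotype is removed from the context scores.
    strength = max_strength * (1.0 - agreement)
    corrected = context_logits - strength * np.log(scene_prior)
    return softmax(corrected), strength


# Usage: classes are [sad, happy]; a "hospital" scene implies sadness,
# but the face looks happy.
hospital_prior = np.array([0.8, 0.2])
happy_face = np.array([0.1, 0.9])
context_scores = np.array([2.0, 0.0])  # context branch leans "sad"

before = softmax(context_scores)
after, strength = debias_context(context_scores, hospital_prior, happy_face)
print(before[0], after[0], strength)  # "sad" probability drops after correction
```

When the face agrees with the scene stereotype, the strength falls to zero and the context prediction passes through unchanged, which matches the idea of correcting the setting only when it seems to be misleading.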

Finally, the information derived from the face and the “denoised” context is fused. This process enables more balanced, realistic, and fair emotion recognition, capable of adapting to a wide variety of real-world situations. This methodological advancement is a fundamental building block paving the way for more robust practical applications.
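The final fusion step can be pictured as a weighted late fusion of the two emotion distributions. In this minimal Python sketch, the function name `fuse` and the default face weight of 0.7 are assumptions, chosen only to reflect the article's point that the face remains the primary source of information; the study's actual fusion architecture is not described in this article.

```python
import numpy as np


def fuse(face_probs, context_probs, face_weight: float = 0.7) -> np.ndarray:
    """Weighted late fusion of face and denoised-context emotion distributions.

    Hypothetical sketch: `face_weight` in [0, 1] sets how much the face
    branch dominates; the remainder goes to the context branch.
    """
    if not 0.0 <= face_weight <= 1.0:
        raise ValueError("face_weight must be between 0 and 1")
    face_probs = np.asarray(face_probs, dtype=float)
    context_probs = np.asarray(context_probs, dtype=float)
    fused = face_weight * face_probs + (1.0 - face_weight) * context_probs
    return fused / fused.sum()  # renormalize in case inputs drift from 1


# Usage: classes are [sad, happy]; the face looks happy, while the
# corrected context still leans slightly "sad".
emotions = ["sad", "happy"]
fused = fuse([0.1, 0.9], [0.74, 0.26])
print(emotions[int(fused.argmax())])  # the face-led reading wins
```

Averaging probabilities is only one option; learned attention or gating layers are common alternatives for combining modalities.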

At this stage, the work remains fundamental research conducted within the CESI LINEACT research unit. The goal is not to immediately deliver a medical tool, but to establish solid, scientifically reliable foundations for future applications.

Nevertheless, these findings open significant perspectives, particularly in healthcare and support for vulnerable individuals. Better detection of stress, discomfort, or emotional distress could, in the future, help professionals intervene earlier and in a more appropriate manner.

By teaching AI not to be misled by appearances, CESI researchers remind us of an essential truth: understanding human emotions requires nuance, context, and, above all, humility. A valuable lesson for artificial intelligence, and for us as well.